April 14, 2026

Jeremiah Grossman

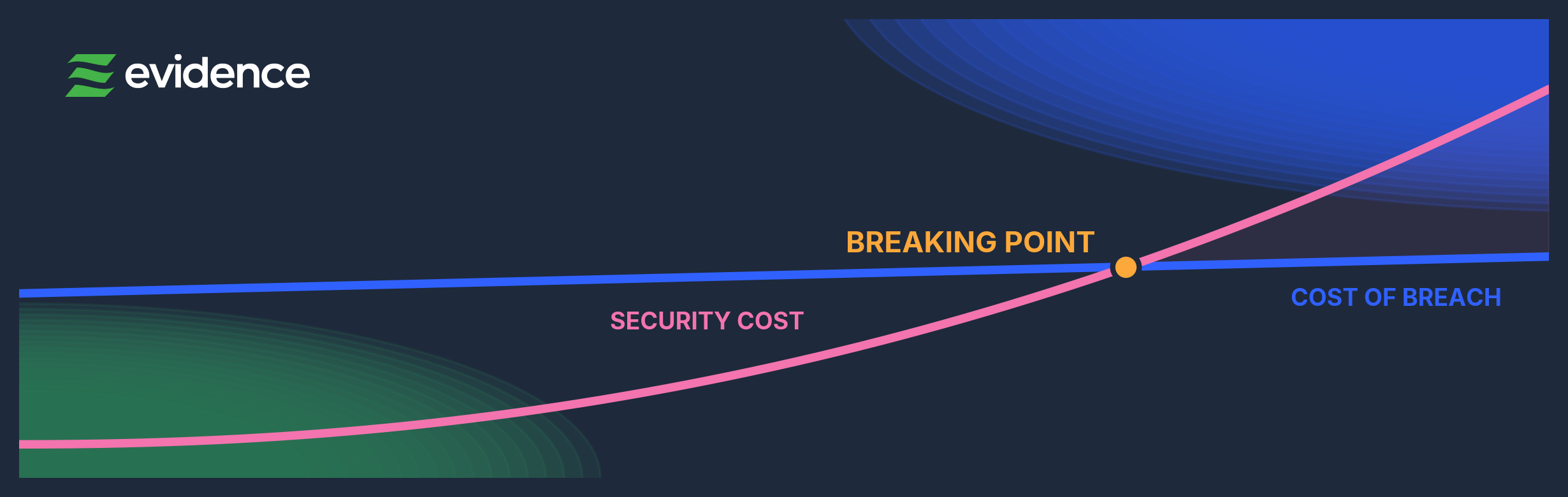

“The traditional licensing model of cybersecurity inevitably drives a point where the cost of security exceeds the cost of breach.”

I was speaking with the head of vulnerability management at a large enterprise. I asked him a simple question: why aren’t you scanning your entire perimeter every day?

His first answer wasn’t surprising, and I’ve heard it for well over a decade. He said “the business” didn’t want to know about even more vulnerabilities they couldn’t fix, at least not anytime soon, if ever. In practice, that is often a polite way of saying, “Please stop bringing us more expensive and unsolvable problems.”

The second answer caught my attention. He said their scanning vendor, a large and well-known market leader, simply couldn’t scale to the size of their environment. That was somewhat surprising, but not nearly as much as what came next.

He told me that the licensing cost of scanning everything daily would exceed their estimated losses in the event of a breach, especially when modeled over a three-year ROI horizon. Then he added, almost as a footnote: “And beyond that, we’re insured.”

Not said defensively. Just… matter-of-fact.

It took me a moment to process that. Not because it was complicated, but because it made too much sense. Especially in environments where asset counts run to six figures and beyond.

Afterward, I started putting the same question to dozens of other organizations. Not all had reached that point, and some hadn’t even considered the cost of scanning versus the cost of financial loss until prompted. But many had, especially those with larger, more complex environments.

And that’s the pattern.

Attack surfaces have been growing steadily for years, and now, with AI making software faster and cheaper to build, that growth is accelerating. This exposes a structural problem we haven’t had to grapple with as an industry. The traditional licensing model of cybersecurity inevitably drives a point where the cost of security exceeds the cost of breach.

Let that sink in for a moment, because it’s monumental.

Most people assume the size of an organization’s attack surface correlates with the financial loss from a breach, but there is currently no study or indication that it does. Actuarial claims data is driven more by attack type, market segment, and revenue size. Attack surface size may relate to the likelihood of a breach, or simply reflect the size of the organization, but it’s the business itself, its revenue, and its industry that correlate with financial impact.

Financial losses tend to reflect business disruption, operational impact, and data sensitivity, not the number of assets in an environment. Larger environments do not consistently produce proportionally larger losses.

At the same time, security products and services are licensed in the opposite way. More assets, more users, more data, more infrastructure, more cost. It’s clean, intuitive, and for a long time it worked well enough. But the world those licensing models were built for has changed.

Environments have expanded from a handful of systems to vast, dynamic, internet-connected ecosystems: cloud, SaaS, APIs, mobile, third-party integrations, and ephemeral compute. What used to be hundreds of assets became thousands, then tens of thousands, and now effectively unbounded.

And this attack surface expansion is accelerating.

AI is making it faster and cheaper than ever to build software, deploy infrastructure, and bring new systems online, which is great unless you’re the one responsible for securing it. The cost of creation is collapsing, while the speed of deployment is increasing. The attack surface is growing with it.

Under the current model, that growth translates directly into higher security costs. More environment, more cost, but the same underlying problem. If the environment continues to grow without bound, and security cost continues to scale with it, while downside remains relatively bounded, those two curves will eventually cross.
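To make the crossover concrete, here is a minimal sketch of the arithmetic, assuming strictly per-asset pricing and a single, fixed estimate of breach loss. The function names and every number in it are hypothetical, chosen only for illustration; real licensing tiers and loss models are messier.

```python
import math


def license_cost(assets: int, price_per_asset: float, years: int = 3) -> float:
    """Per-asset licensing: total cost grows linearly with environment size."""
    return assets * price_per_asset * years


def crossover_assets(modeled_breach_loss: float, price_per_asset: float, years: int = 3) -> int:
    """Smallest asset count at which licensing over `years` exceeds the modeled loss.

    Assumes the loss estimate stays flat as the environment grows.
    """
    return math.floor(modeled_breach_loss / (price_per_asset * years)) + 1


# Hypothetical inputs: a $3M modeled breach loss, $10 per asset per year, three-year horizon.
print(crossover_assets(3_000_000, 10.0, years=3))  # 100001
```

With those made-up inputs, the lines cross just past 100,000 assets, which is exactly the six-figure territory where the conversation above took place.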

And when they do, everything changes. It changes how we measure value, how we prioritize, and how we price security, because financial losses from breaches do not scale the way licensing costs do.

That is where meaningful innovation begins. It comes not from measuring everything equally, but from focusing on what actually changes outcomes. It comes not from scaling effort linearly with environment size, but from allocating it intelligently. And it comes not from asking how much we can cover, but what meaningfully reduces risk.

This may sound like a subtle distinction, but it’s not. It is the difference between scaling security and scaling cost. This shift places unit economics at the center, focusing not just on total spend, but on what each additional unit of effort actually produces. If the marginal cost of securing one more asset exceeds the marginal impact on outcomes, the system needs to change, and in many environments, it already has.
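The marginal test can be sketched in a few lines. This is an illustration under loud assumptions, not a method from any particular product: the `Asset` type, the `expected_loss_reduction` field, and all of the dollar figures are hypothetical, and producing a credible per-asset estimate is the hard part that the sketch skips.

```python
from dataclasses import dataclass


@dataclass
class Asset:
    name: str
    # Hypothetical estimate: yearly expected loss avoided by covering this asset.
    expected_loss_reduction: float


def worth_covering(assets: list[Asset], price_per_asset: float) -> list[Asset]:
    """Keep only assets whose marginal impact is at least the marginal cost."""
    ranked = sorted(assets, key=lambda a: a.expected_loss_reduction, reverse=True)
    return [a for a in ranked if a.expected_loss_reduction >= price_per_asset]


inventory = [
    Asset("payments-api", 50_000.0),
    Asset("marketing-site", 400.0),
    Asset("parked-domain", 5.0),
]
selected = worth_covering(inventory, price_per_asset=30.0)
print([a.name for a in selected])  # ['payments-api', 'marketing-site']
```

Under flat per-asset pricing, the third asset costs more to cover than it is estimated to save, which is the point at which the paragraph above says the system needs to change.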

You can’t afford to secure everything, even if your spreadsheet says you should. So you have to get very good at deciding what matters. That means prioritizing.

For organizations, this means rethinking how value is measured, moving beyond coverage and volume toward what actually changes outcomes. For vendors, it means aligning architecture and pricing with results, not just scale.

This is where the industry is heading. It is not moving away from growth but learning to operate alongside it. It is not constrained by scale but becoming more intelligent in how it navigates scale. The future of cybersecurity will not be defined by how much we can cover; it will be defined by how well we decide what matters.