May 1, 2026

Jeremiah Grossman

TLDR: Mythos Preview and similar AI models will find more vulnerabilities and occasionally speed up exploit development, but they do not change how financially motivated adversaries operate. Attackers already have more exposed targets and working exploits than they need, and they optimize for what reliably makes the most money with the least effort, not what is technically novel. Mythos is like giving fishermen better sonar in a barrel already full of fish, and occasionally a better spear. It improves efficiency, but it doesn’t change the outcome.

Every conversation about vulnerability management since April 7 now seems to include Anthropic’s announcement of Mythos Preview, along with the usual mix of fear, uncertainty, and “this changes everything.” Maybe. Maybe not. The dominant narrative suggests AI will flood the world with new vulnerabilities and make exploit development trivial, leading to a kind of “vulnpocalypse.” It’s a compelling idea, but it assumes the bottleneck in real attacks is vulnerability discovery or exploit creation.

It isn’t.

When you look at a financially motivated adversary campaign end-to-end, vulnerability discovery and exploit creation are not the steps where things get especially hard. For adversaries, the problem was never a shortage of vulnerabilities or exploits. For defenders, the problem was always knowing specifically which vulnerabilities adversaries actually use, the ones that lead to real losses, and acting in time.

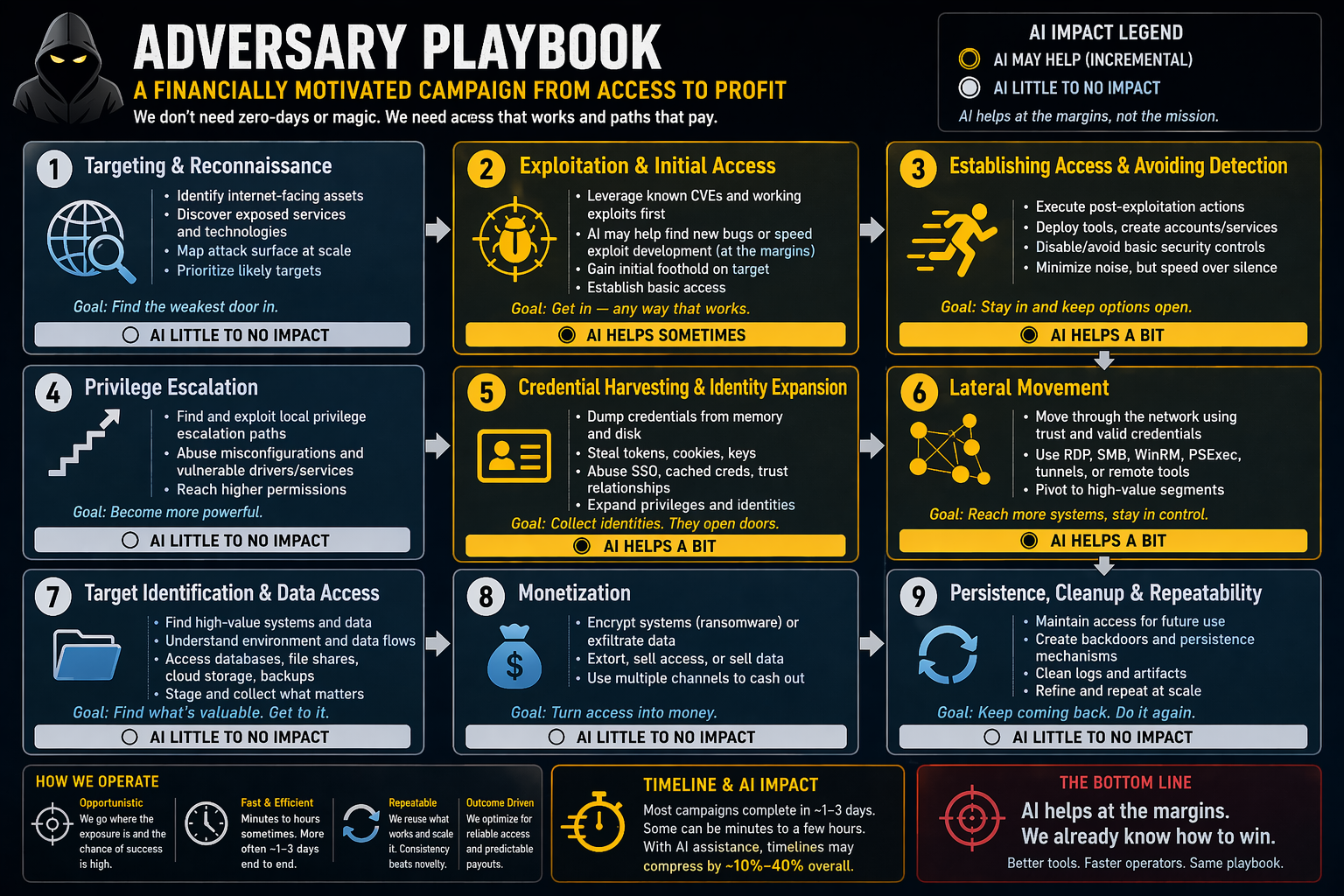

To understand what actually changes, zoom out and look at how these adversaries operate in the real world. One of the most common patterns is simple: exploit a known CVE on an Internet-facing system, gain access, and turn that access into money. Clean, repeatable, and still highly effective. Some of these campaigns can unfold in minutes to a few hours, but more often they take roughly one to three days. These adversaries aren’t waiting for better exploits. They already have more targets than they can process, enough working methods to sustain campaigns, and proven ways to convert access into revenue. They are not optimizing for novelty; they are optimizing for reliability, scale, and ultimately profit.

Against that backdrop, Mythos Preview fits into a very specific part of the problem. Traditional scanning tools operate on the known side of the equation, identifying CVEs by matching software versions to published databases. Mythos Preview operates on the unknown side. Anthropic claims it can outperform most humans at finding and, in some cases, developing exploits for software vulnerabilities by analyzing code and system behavior to uncover new bugs, including ones never seen before. The company also claims Mythos Preview has already discovered thousands of serious vulnerabilities across major operating systems and web browsers. If that holds and the technology spreads, we should expect more vulnerabilities to be discovered and somewhat faster paths to exploitation.
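To make the "known side of the equation" concrete, here is a minimal Python sketch of version-based matching from a defender's point of view. It is an illustration under stated assumptions, not how any particular scanner works: the advisory IDs, product names, and the `match_known_issues` helper are all hypothetical, and real tools use far richer version and configuration logic.

```python
from typing import NamedTuple


class Advisory(NamedTuple):
    advisory_id: str   # placeholder identifier, deliberately not a real CVE
    product: str       # hypothetical product name
    fixed_in: tuple    # first version that contains the fix


# Hypothetical advisory data, standing in for a published vulnerability database.
ADVISORIES = [
    Advisory("EXAMPLE-0001", "examplevpn", (2, 4, 1)),
    Advisory("EXAMPLE-0002", "examplecms", (5, 0, 0)),
]


def parse_version(text: str) -> tuple:
    """Turn a dotted version string such as '2.3.9' into (2, 3, 9)."""
    return tuple(int(part) for part in text.split("."))


def match_known_issues(product: str, version: str) -> list:
    """Return advisory IDs whose fix version is newer than the observed version."""
    observed = parse_version(version)
    return [
        advisory.advisory_id
        for advisory in ADVISORIES
        if advisory.product == product and observed < advisory.fixed_in
    ]
```

The lookup can only report issues that are already in the database, which is the limitation the paragraph above contrasts with discovery of previously unknown bugs.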

But that alone does not change how attacks actually work.

In practice, Mythos Preview complements CVE scanning. It expands the pool of potential findings while increasing the importance of prioritizing what actually matters. In that world, AI does not change what financially motivated adversaries do. It changes how fast they do it. Reconnaissance was already automated at Internet scale, so better discovery does not move the needle. Exploitation may get faster at the margins, especially for newly disclosed vulnerabilities, but adversaries will continue to rely on a small set of proven CVEs that consistently work. Even if AI lowers the barrier to developing new exploits, those will emerge gradually, in small numbers, and get reused like everything else.

Bottom line: Mythos Preview and similar AI models will not break vulnerability management. If you look at breach and financial loss reports, it’s already broken. Vulnerability discovery and the difficulty of exploit development were never the primary constraints for adversaries. Treating them as such is exactly how we ended up here.

For defenders, the answer is not to do more of everything. It is to do the right things faster. Maintain continuous visibility into what is actually exposed on the Internet, because adversaries are already good at finding what you missed. Scan continuously. Decide quickly. Patch or mitigate the small set of vulnerabilities that actually lead to financial loss. More findings do not mean more risk; they usually mean more work.
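One way to picture "the small set" is as a filter over scan output. The Python sketch below is illustrative only: the `Finding` fields, the sample data, and the `exploited_ids` set (standing in for whatever list of actively exploited vulnerabilities a team trusts) are assumptions, not something the article specifies.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Finding:
    asset: str              # hypothetical asset name
    vuln_id: str            # placeholder identifier, not a real CVE
    internet_exposed: bool  # whether the asset is reachable from the Internet


def prioritize(findings, exploited_ids):
    """Keep findings that are both Internet-exposed and known to be exploited."""
    urgent = [
        f for f in findings
        if f.internet_exposed and f.vuln_id in exploited_ids
    ]
    # Sort for a stable, readable work queue.
    return sorted(urgent, key=lambda f: (f.asset, f.vuln_id))


# Hypothetical scan output and exploited-in-the-wild list.
SAMPLE_FINDINGS = [
    Finding("vpn-gateway", "EXAMPLE-0001", True),
    Finding("intranet-wiki", "EXAMPLE-0001", False),
    Finding("public-site", "EXAMPLE-0003", True),
]
SAMPLE_EXPLOITED = {"EXAMPLE-0001"}

work_queue = prioritize(SAMPLE_FINDINGS, SAMPLE_EXPLOITED)
```

With this sample data, three findings reduce to one work item, which is the sense in which more findings mean more triage rather than more risk.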

What follows breaks down a financially motivated adversary campaign, step by step, from initial targeting through monetization, to separate what is already solved from what actually constrains adversaries, and to show where AI meaningfully changes the equation versus where it simply adds speed and noise.

Low impact: AI doesn’t meaningfully change this step because financially motivated adversaries already operate with automated, Internet-scale visibility into exposed systems.

Financially motivated adversaries continuously scan the Internet for exposed systems such as websites, VPNs, appliances, and APIs. This activity is broad, opportunistic, and designed for scale, not precision targeting. They fingerprint software, estimate versions, and map those to known or likely vulnerabilities. The process is highly automated and low-cost, and there is no shortage of targets. In practice, there are more exposed systems than adversaries can profitably exploit.

AI can slightly improve fingerprinting accuracy, infer technologies when data is incomplete, and help prioritize targets that appear more exploitable or valuable. These improvements are real but incremental. Adversaries already operate effectively without perfect information, and better visibility does not materially change outcomes. The constraint isn’t finding targets, but what happens after access is gained.

Medium impact: AI improves speed and efficiency, but does not change adversary behavior because financially motivated adversaries already rely on proven, repeatable exploit methods.

Once a vulnerable Internet-facing system is identified, adversaries attempt exploitation using known CVEs. This process is automated, repeated, and executed across many targets simultaneously. They rely on a mix of publicly available proof-of-concept code, private tooling, and modified exploits tuned for reliability and scale. Operational success is probabilistic, but adversaries don’t require high success rates. Volume compensates, and even a small percentage of successful compromises is sufficient to sustain campaigns.
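The "volume compensates" point is simple arithmetic, sketched below in Python. The function names and any numbers plugged into them are illustrative assumptions rather than figures from real campaigns, and the second function assumes each attempt succeeds independently, which is a simplification.

```python
def expected_compromises(targets_attempted: int, success_rate: float) -> float:
    """Expected number of successful compromises across all attempts."""
    if not 0.0 <= success_rate <= 1.0:
        raise ValueError("success_rate must be between 0 and 1")
    return targets_attempted * success_rate


def chance_of_any_success(targets_attempted: int, success_rate: float) -> float:
    """Probability of at least one success, assuming independent attempts."""
    if not 0.0 <= success_rate <= 1.0:
        raise ValueError("success_rate must be between 0 and 1")
    return 1.0 - (1.0 - success_rate) ** targets_attempted
```

Under these assumptions, a hypothetical 1% success rate across 10,000 attempts still yields about 100 expected compromises, which is why a marginal improvement in per-target success matters less than it might seem.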

AI can reduce the time and effort required to generate or adapt exploit code, particularly for newly disclosed vulnerabilities. This may shorten time-to-exploitation and improve success rates at the margin. However, adversaries already have access to abundant, reliable exploit methods and are optimized for reuse. New exploit capability is likely to emerge gradually, in small numbers, and be reused or shared rather than produced at massive scale.

Medium impact: AI may assist, but does not reduce detectability because this step requires active interaction with systems that inherently generate observable signals.

After gaining access, adversaries stabilize it using persistence mechanisms such as web shells, backdoors, or built-in system tools. They often “live off the land” to reduce obvious indicators while exploring the environment. This phase is interactive and time-sensitive, making it inherently noisy. Even skilled operators generate logs and artifacts as they access files, enumerate systems, and establish persistence.

AI may assist by generating commands, suggesting persistence methods, and adapting actions to the environment. In practice, it often increases the volume and speed of activity, which can increase detectability. However, financially motivated adversaries will still favor these approaches if they improve throughput, since most environments do not detect or respond quickly enough for increased visibility to change outcomes.

Low impact: AI does not meaningfully change this step because escalation depends on environment-specific weaknesses, not a lack of techniques.

Adversaries attempt to increase their level of control by exploiting misconfigurations, reusing credentials, or abusing weak access controls. This step is often straightforward and may not require complex exploitation. In many cases, escalation isn’t necessary if the initial access already provides sufficient reach. Success depends on what weaknesses exist in the environment.

AI may assist in identifying potential escalation paths more quickly by analyzing configurations and access conditions. However, this step remains highly dependent on environment-specific factors. AI doesn’t introduce new capabilities or meaningfully increase the likelihood of success.

Medium impact: AI may assist with analysis, but does not change outcomes because success depends on existing access and identity weaknesses.

Adversaries collect credentials, tokens, and identity artifacts to expand access. This includes extracting credentials from memory, reading configuration files, and obtaining API keys. Identity systems often contain weaknesses such as over-privileged accounts or credential reuse. This step determines how far access can extend across systems.

AI may help analyze configuration data and identify relationships between systems and identities more quickly. This can improve efficiency in navigating complex environments. However, adversaries already have effective tools and playbooks, and outcomes remain constrained by what access and permissions exist.

Medium impact: AI may assist navigation, but does not change outcomes because movement depends on credentials and trust relationships.

Adversaries move laterally using valid credentials and legitimate protocols. This allows them to expand access while blending with normal system activity. Movement follows existing trust relationships and connectivity between systems. This step is well understood and widely practiced.

AI may help identify movement paths and interpret environments more quickly. However, adversaries already have established methods for navigating networks. Success depends on access, not guidance.

Low impact: AI does not meaningfully change this step because valuable targets are predictable and well understood.

Adversaries identify systems that contain valuable data or operational leverage, such as databases, file shares, and backups. Enterprise environments follow predictable patterns, making high-value targets relatively easy to locate. Access determines what can be reached.

AI may help analyze data and systems more quickly, but adversaries already know where to look. It doesn’t change what is valuable or accessible.

Low impact: AI does not meaningfully change this step because monetization models are already mature and optimized.

Adversaries monetize access through ransomware, extortion, fraud, or selling access. These methods are standardized and supported by mature ecosystems. Success depends on leverage and access, not technical novelty.

AI may assist marginally in analyzing data or automating parts of communication, but it does not change business models or outcomes.

Low impact: AI does not meaningfully change this step because adversaries already operate with established playbooks and scalable workflows.

Adversaries maintain access, remove artifacts, and reuse successful techniques across campaigns. Workflows are already designed for scale and repeatability.

AI may assist in organizing and automating parts of these workflows, but adversaries already operate efficiently at scale. Improvements are incremental.